Architecting Trust: A Dive into AAISM Domain 3 – AI Technologies and Controls

Architecting operational integrity through secure-by-design frameworks, robust data veracity controls, and continuous lifecycle monitoring to ensure AI systems remain secure, ethical, and trustworthy.

TRAININGISACA- AAISMAAISM DOMAIN 3

Harshaun Singh

2/24/20263 min read

Architecting Trust: A Dive into AAISM Domain 3 – AI Technologies and Controls

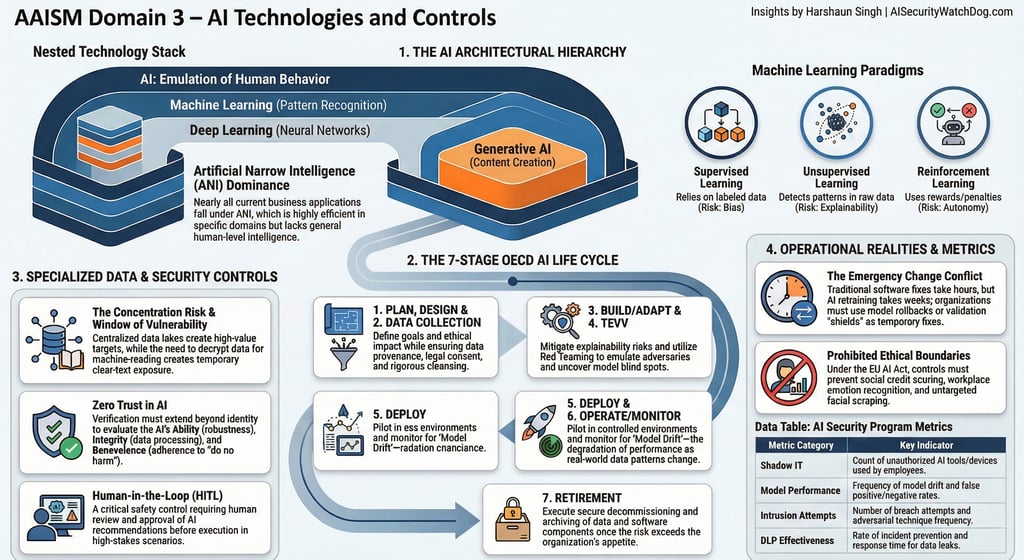

While Domain 1 established the governance strategy and Domain 2 addressed risk management, Domain 3: AI Technologies and Controls provides the technical blueprint for secure implementation. In this domain, we move beyond policies to the underlying architecture, lifecycle management, and specific security "guardrails" required to protect an organization's AI ecosystem.

--------------------------------------------------------------------------------

1. AI Architecture and Design: Beyond the "Black Box"

To secure an AI implementation, one must first understand its structural hierarchy. AI technology is often categorized in nested levels: Artificial Intelligence (the broad emulation of human behavior), Machine Learning (learning from data without explicit programming), Deep Learning (using multi-layered neural networks), and Generative AI (creating new content).

Capabilities: Currently, nearly all business applications are Artificial Narrow Intelligence (ANI), which is highly accurate within a specific domain but lacks general human intelligence.

The "Black Box" Problem: Deep Learning models, with their complex hidden layers, often produce results that are practically untraceable. This complexity is a inherent vulnerability; if a decision cannot be traced, it cannot be fully audited for bias or tampering.

Secure by Design (SBD): Organizations must adopt AI Secure by Design principles, taking ownership of security outcomes and embracing radical transparency from the inception of the model.

2. AI Life Cycle Management: The Retraining Conflict

The OECD AI Life Cycle provides a seven-phase framework: Plan and Design, Collect and Process Data, Build/Adapt Models, Test and Validate (TEVV), Deploy, Operate, and finally, Retire.

AI Red Teaming: During the TEVV phase, organizations should emulate real-world adversaries to uncover blind spots and validate security assumptions.

The Emergency Change Management Conflict: A critical strategic clash exists in AI operations. While a traditional software bug can be patched in hours, a corrupted AI model (e.g., one suffering from data poisoning) may require retraining, which can take weeks.

Resilience Strategies: Because of this delay, organizations must have the capability to roll back to a verified model version or implement output sanitization as a temporary shield.

3. Data Controls: The Lifeblood of AI

In AI, data is the raw material that forms the system's logic. Therefore, data controls must be more robust than in traditional IT.

Veracity and Integrity: Veracity (quality and accuracy) is the most critical characteristic for security; if data is flawed, the AI will learn incorrect logic, leading to "hallucinations".

Specialized Risks:

Concentration Risk: Centralized data lakes become prime targets for attackers.

The Window of Vulnerability: AI training often requires data to be decrypted into clear text to be machine-readable, creating a temporary state where sensitive information is exposed.

Access Control: The principle of least privilege must be applied strictly to training datasets, as data scientists may inadvertently broaden access during exploration.

4. Privacy, Ethics, Trust, and Safety

These controls share a common focus: preventing harm to individuals and society.

Human-in-the-Loop (HITL): This is a vital safety net where a person reviews and approves an AI recommendation before a critical action is taken (e.g., a doctor approving an AI-generated diagnosis).

Prohibited Uses: Under frameworks like the EU AI Act, certain AI uses are forbidden, including social credit scoring and emotion recognition in workplaces or schools.

Privacy Rights: AI must be designed to respect the right to be forgotten and require specific user consent for training, rather than relying on general terms of service.

5. Security Monitoring and Controls

Continuous monitoring is essential because AI is probabilistic and models naturally degrade over time.

Zero Trust for AI: Beyond standard access management, Zero Trust in AI involves verifying the AI's Ability (reliability), Integrity (lack of manipulation), and Benevolence (adherence to "do no harm").

Model Drift: Monitoring must track performance against quantitative thresholds. If accuracy drops below a preset level (e.g., 85%), it should automatically trigger a retraining protocol.

Shadow AI: Organizations must use tools like Cloud Access Security Brokers (CASBs) and user education to identify and block the use of unapproved AI applications by employees.

--------------------------------------------------------------------------------

💡 Knowledge Check

1. What is the fundamental conflict in AI emergency change management compared to traditional IT?

Answer: Traditional IT patches take hours, but correcting a fundamental AI flaw (like data poisoning) requires retraining, which can take weeks.

2. Which "V" of Big Data is considered the most critical defense against AI hallucinations?

Answer: Veracity (quality, accuracy, and integrity of the data).

3. What is "Model Drift" and how is it managed?

Answer: It is the natural degradation of a model's performance as real-world data patterns change. It is managed through continuous monitoring against preset quantitative thresholds.

4. Name two "unacceptable" uses of AI according to ethical controls and the EU AI Act.

Answer: Social credit scoring by public authorities and untargeted scraping of facial images from the internet.

5. What is the "Window of Vulnerability" in the context of AI data controls?

Answer: The period during model training when sensitive data must be decrypted into clear text to be machine-readable, exposing it to potential unauthorized access.

WatchDog Wire

Bridging the gap between AI innovation and cybersecurity. Explore our AI Risk Intelligence & Governance Briefs.

AI Security WatchDog

AI Risk Intelligence & Governance Briefs. Weekly insights on AI Incidents, regulations and vendor risks.

Contact

info@AISecurityWatchdog.com

Subscribe

© 2025. All rights reserved.