5 Game-Changing Takeaways from the CSA AI Controls Matrix (AICM)

The Cloud Security Alliance (CSA) developed the AI Controls Matrix (AICM) to provide a structured framework for securing Generative AI and Large Language Models.

AI RISK INTELLIGENCE | AI GOVERNANCE | CSA AICM

Harshaun Singh

2/24/2026 | 4 min read

The Wild West of AI Governance Just Got a Map: 5 Game-Changing Takeaways from the CSA AI Controls Matrix (AICM)

The enterprise race to integrate Generative AI has moved at a pace that makes the early days of cloud migration look like a slow crawl. We are currently in the "land grab" phase of GenAI, where organizations are frantically layering Large Language Models (LLMs) and orchestration frameworks onto their tech stacks to capture productivity gains. Yet, a fundamental question haunts the C-suite: in this multi-layered supply chain, who is actually holding the shield?

The uncomfortable truth is that the "Cloud of Yesterday" was never built for the "AI of Tomorrow." Traditional security frameworks fail to account for the unique failure modes of AI: emergent behavior, model inversion, and training data poisoning. This ambiguity creates a vacuum of responsibility in which AI Customers (AICs) assume their providers have secured the model, while providers assume the customer is managing the prompts.

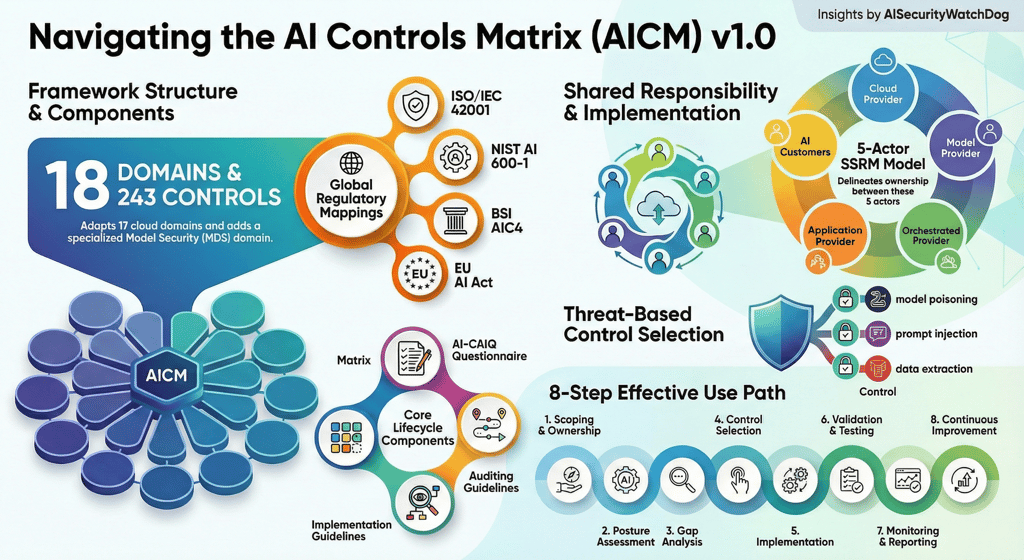

Enter the Cloud Security Alliance (CSA) and its new AI Controls Matrix (AICM) v1.0. Think of it not as just another checklist but as a fundamental re-architecting of trust. Drawing on the expertise of a global, multidisciplinary coalition, the framework translates high-level principles into 243 actionable security controls spread across 18 domains. It is the industry’s "Rosetta Stone," and here are the five strategic takeaways that change the game for CTOs and compliance officers alike.

--------------------------------------------------------------------------------

1. The AI Supply Chain is Much Longer Than You Think: The 5-Layer SSRM

The most significant strategic shift in the AICM is the expansion of the Shared Security Responsibility Model (SSRM). The old binary of "Provider vs. Customer" is dead. In the GenAI era, a single application may involve five distinct actors, each with a different slice of the risk pie.

Ignoring these granular distinctions leads to "responsibility leakage." If an organization fails to recognize the difference between an Orchestrated Service Provider (OSP) and a Model Provider (MP), it risks catastrophic gaps in areas like API security or prompt management, potentially leading to multi-million-dollar data exfiltration events.

The Five Key Roles in the AI SSRM:

Cloud Service Providers (CSPs): Accountable for the GenAI OPS/Processing Infrastructure (PI) layer, including physical datacenter security and hardware maintenance.

Model Providers (MPs): Responsible for the model development lifecycle, foundational model validation, and base model training data curation.

Orchestrated Service Providers (OSPs): Responsible for the aggregation and orchestration of multiple AI services, API management, and service integration.

Application Providers (APs): Responsible for end-user applications, including application-level guardrails and output validation.

AI Customers (AICs): Responsible for the secure consumption of services, engineering governance, and acceptable use enforcement.
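One way to make the five-role model concrete is to record each role's responsibility areas and check that nothing a deployment needs is left unclaimed. The sketch below is a minimal illustration under stated assumptions: the area strings are paraphrased from the role descriptions above, and the mapping, the `owners_of` and `unowned` helpers, and the "prompt management" example are hypothetical, not the official AICM SSRM allocation.

```python
from enum import Enum


class Role(Enum):
    """The five actors in the AICM Shared Security Responsibility Model."""
    CSP = "Cloud Service Provider"
    MP = "Model Provider"
    OSP = "Orchestrated Service Provider"
    AP = "Application Provider"
    AIC = "AI Customer"


# Illustrative mapping paraphrased from the role descriptions in this article.
# Hypothetical labels: this is NOT the official AICM control-level allocation.
RESPONSIBILITIES: dict[Role, set[str]] = {
    Role.CSP: {"physical datacenter security", "hardware maintenance"},
    Role.MP: {"model development lifecycle", "foundational model validation",
              "base model training data curation"},
    Role.OSP: {"service orchestration", "api management", "service integration"},
    Role.AP: {"application guardrails", "output validation"},
    Role.AIC: {"secure consumption", "engineering governance",
               "acceptable use enforcement"},
}


def owners_of(area: str) -> list[Role]:
    """Return every role that claims the given responsibility area."""
    return [role for role, areas in RESPONSIBILITIES.items() if area in areas]


def unowned(required_areas: set[str]) -> set[str]:
    """Return required areas that no role claims ("responsibility leakage")."""
    claimed = set().union(*RESPONSIBILITIES.values())
    return required_areas - claimed
```

Here `unowned({"api management", "prompt management"})` returns `{"prompt management"}`, the kind of unclaimed area the "responsibility leakage" warning describes. A real inventory would use the AICM's own control-level ownership rather than free-text labels.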

--------------------------------------------------------------------------------

2. "Model Security" (MDS) is Now a Dedicated Discipline

While the AICM is built on the foundation of the CSA Cloud Controls Matrix (CCM), it introduces a brand-new 18th domain: Model Security (MDS). Although it is the newest addition, MDS appears as Domain 14 in the documentation's domain listing. It signifies a shift from securing "servers" to securing "intelligence."

This domain consists of 13 control specifications designed to mitigate threats that general IT governance does not address, such as training pipeline compromise and adversarial attacks. By isolating MDS, the CSA pushes organizations to address the mathematical and architectural vulnerabilities inherent in the model itself.

"The Model Security (MDS) domain comprises thirteen (13) control specifications that are unique to AI systems and focus on securing the entire model development lifecycle, from training pipeline security to model artifact integrity, documentation, and adversarial robustness."

From a strategic perspective, ignoring the MDS domain is a reputational ticking time bomb. Without controls that address emergent behavior and verify model integrity, organizations risk deploying "black box" systems that could generate unsafe outputs or leak proprietary training data.
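Artifact integrity, one of the areas the MDS quote names, can be illustrated with a common technique: record a cryptographic digest of the model file when it is approved, then refuse to load any file whose digest no longer matches. The sketch below assumes SHA-256 and a locally stored artifact; the function names are hypothetical, and the AICM does not prescribe this specific mechanism.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        # Read fixed-size chunks until read() returns empty bytes (end of file).
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_hex: str) -> bool:
    """Return True only if the artifact matches the digest recorded at approval time."""
    # compare_digest runs in time independent of where the strings differ.
    return hmac.compare_digest(sha256_of(path), expected_hex.lower())
```

In a real pipeline, the expected digest would live somewhere the artifact's producer cannot silently overwrite, such as a signed release manifest; a digest stored next to the file it protects offers little assurance.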

--------------------------------------------------------------------------------

3. A Compliance "Rosetta Stone" for Global Regulators

For compliance officers, the AICM offers a way out of "framework fatigue." Instead of running redundant audits for the US, EU, and international markets, the AICM features a Scope Applicability matrix that maps its 243 controls directly to the world's most prominent standards:

ISO/IEC 42001

NIST AI 600-1

BSI AIC4

The EU AI Act

Critically, the AICM provides a Gap Level analysis (categorized as No Gap, Partial Gap, or Full Gap). Once a compliance team has met the AICM baseline, this analysis shows how much additional work is required to satisfy a mapped standard such as the EU AI Act. This "apply once, satisfy many" approach transforms compliance from a cost center into a competitive advantage.
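The Gap Level idea lends itself to a simple tally. The sketch below assumes a reading in which No Gap means the AICM baseline already satisfies the mapped requirement, Partial Gap means some extra work remains, and Full Gap means the requirement is not covered. The control IDs and gap assignments are hypothetical placeholders, not values from the published mapping.

```python
from enum import Enum


class Gap(Enum):
    NO_GAP = "No Gap"            # assumed: baseline already satisfies the requirement
    PARTIAL_GAP = "Partial Gap"  # assumed: some additional work remains
    FULL_GAP = "Full Gap"        # assumed: requirement not covered by the baseline


# Hypothetical excerpt: control ID -> gap level against one target framework.
# Placeholder data only, NOT taken from the published AICM mapping.
EU_AI_ACT_GAPS: dict[str, Gap] = {
    "CTRL-001": Gap.NO_GAP,
    "CTRL-002": Gap.PARTIAL_GAP,
    "CTRL-003": Gap.FULL_GAP,
    "CTRL-004": Gap.NO_GAP,
}


def remaining_work(gaps: dict[str, Gap]) -> dict[Gap, list[str]]:
    """Group control IDs by gap level, sorted, so a team can see what needs work."""
    grouped: dict[Gap, list[str]] = {level: [] for level in Gap}
    for control_id, level in sorted(gaps.items()):
        grouped[level].append(control_id)
    return grouped


def coverage_ratio(gaps: dict[str, Gap]) -> float:
    """Fraction of mapped controls that need no extra work for this framework."""
    if not gaps:
        return 0.0
    return sum(level is Gap.NO_GAP for level in gaps.values()) / len(gaps)
```

For the placeholder data, `coverage_ratio` returns 0.5 and `remaining_work` lists `CTRL-002` as a partial gap and `CTRL-003` as a full gap, which is where the compliance effort would concentrate.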

--------------------------------------------------------------------------------

4. Security Must Follow the "Six Phases" of the AI Lifecycle

The AICM rejects the notion that security is a "gate" at the end of a deployment pipeline. Instead, it maps controls across six distinct phases:

Preparation: Data curation and resource provisioning.

Development: Model architecture and training.

Evaluation/Validation: Red-teaming and validation.

Deployment: Orchestration and AI services supply chain.

Delivery: Operations and continuous monitoring.

Service Retirement: Archival and model disposal.

The strategic outlier here is Service Retirement. Most organizations overlook the decommissioning phase, yet the AICM identifies it as a high-risk zone for intellectual property theft and data privacy violations. Proper "model disposal" and "data deletion" are not just administrative tasks; they are the final line of defense against a decommissioned model being exfiltrated or reverse-engineered by competitors.
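A quick way to test whether a control program really spans all six phases is to tag each internal control with the phases it covers and list any phase left empty. In this minimal sketch the control names and their phase tags are hypothetical; the inventory deliberately omits retirement controls to show how easily that phase slips through.

```python
from enum import Enum


class Phase(Enum):
    """The six AI lifecycle phases named in the AICM, in order."""
    PREPARATION = 1
    DEVELOPMENT = 2
    EVALUATION_VALIDATION = 3
    DEPLOYMENT = 4
    DELIVERY = 5
    SERVICE_RETIREMENT = 6


# Hypothetical internal control inventory: control name -> phases it covers.
INVENTORY: dict[str, set[Phase]] = {
    "training data provenance review": {Phase.PREPARATION},
    "secure training pipeline": {Phase.DEVELOPMENT},
    "adversarial red-team exercise": {Phase.EVALUATION_VALIDATION},
    "runtime output monitoring": {Phase.DEPLOYMENT, Phase.DELIVERY},
}


def uncovered_phases(inventory: dict[str, set[Phase]]) -> list[Phase]:
    """Return lifecycle phases, in order, that no control in the inventory covers."""
    covered = set().union(*inventory.values())
    return [phase for phase in Phase if phase not in covered]
```

With this inventory, `uncovered_phases(INVENTORY)` returns `[Phase.SERVICE_RETIREMENT]`, flagging the decommissioning gap discussed above.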

--------------------------------------------------------------------------------

5. The AI-CAIQ: The New Standard for Vendor Trust

The AI Consensus Assessment Initiative Questionnaire (AI-CAIQ) is the matrix’s most practical tool. It is a set of structured questions that allows providers to document their security posture with "Yes/No" clarity.

For vendors (MPs, OSPs, and APs), a completed AI-CAIQ can be submitted to the CSA STAR for AI Registry as a Level 1 STAR self-attestation. This can shorten sales cycles considerably by giving prospective customers a transparent, ready-made response to their security questionnaires. The questionnaire includes dedicated columns such as Service Provider Implementation Description and Service Customer Responsibilities so that both parties know exactly where the boundary of trust lies.

"The CSA STAR for AI program enables organizational transparency and reduces the number of AI security questionnaires providers should complete for customers."
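The division of labor that the AI-CAIQ columns capture can be modeled as a small record type. In the sketch below, the two description fields mirror the column names mentioned above, while `question_id`, the restriction of answers to "Yes" or "No", and the helper functions are illustrative assumptions rather than the official CSA questionnaire schema.

```python
from dataclasses import dataclass
from typing import Literal


@dataclass(frozen=True)
class CaiqEntry:
    """One answered question in an AI-CAIQ-style questionnaire (illustrative shape)."""
    question_id: str  # hypothetical identifier, not an official CSA question ID
    answer: Literal["Yes", "No"]
    service_provider_implementation_description: str
    service_customer_responsibilities: str


def customer_obligations(entries: list[CaiqEntry]) -> dict[str, str]:
    """Collect what the customer must still do, keyed by question ID."""
    return {
        e.question_id: e.service_customer_responsibilities
        for e in entries
        if e.service_customer_responsibilities.strip()
    }


def unmet(entries: list[CaiqEntry]) -> list[str]:
    """List questions the provider answered 'No', as candidates for follow-up."""
    return [e.question_id for e in entries if e.answer == "No"]
```

A customer reviewing a submitted questionnaire could use `customer_obligations` to build a checklist of its own duties and `unmet` to flag items for due-diligence follow-up.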

--------------------------------------------------------------------------------

Conclusion: From Chaos to Control

The release of the AICM v1.0 marks the end of the "Wild West" era of AI deployment. We are entering a phase where accountability is non-negotiable and the supply chain must be transparent. By distilling the GenAI stack into 243 controls across a 5-layer responsibility model, the CSA has provided the blueprint for a resilient, trustworthy AI ecosystem.

In the rush to deploy the next revolutionary model, every tech leader must ask: Is your organization's current security framework built for the cloud of yesterday, or the AI of tomorrow?

For more information and to access the full framework, visit the official CSA AICM webpage.

WatchDog Wire

Bridging the gap between AI innovation and cybersecurity. Explore our AI Risk Intelligence & Governance Briefs.

AI Security WatchDog

AI Risk Intelligence & Governance Briefs. Weekly insights on AI Incidents, regulations and vendor risks.

Contact

info@AISecurityWatchdog.com


© 2025. All rights reserved.